- DELL EQUALLOGIC SAN HQ UNABLE TO CONNECT TO ARRAY UPDATE

- DELL EQUALLOGIC SAN HQ UNABLE TO CONNECT TO ARRAY SERIES

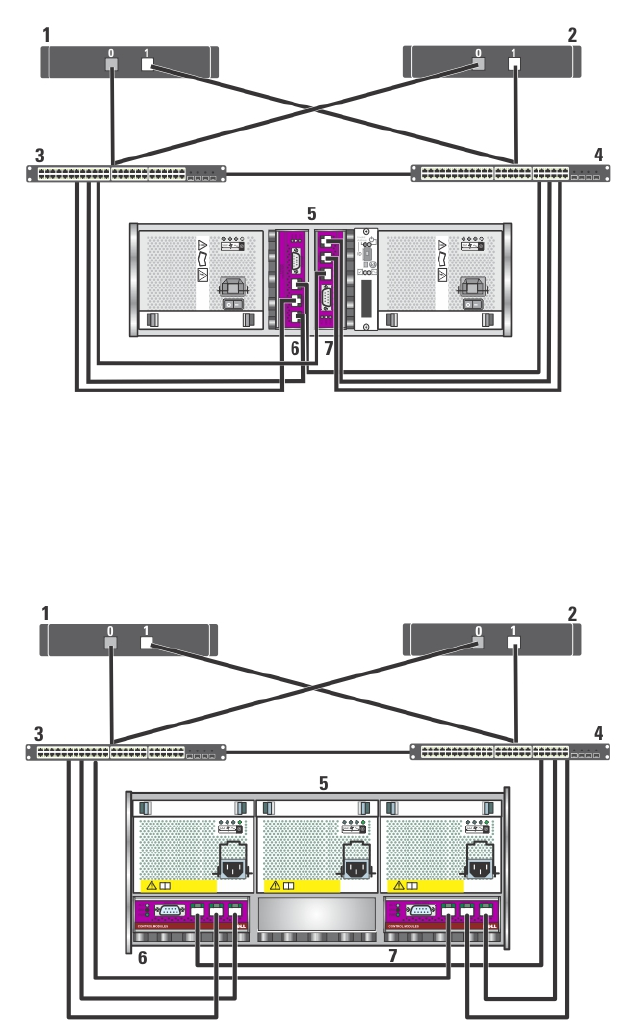

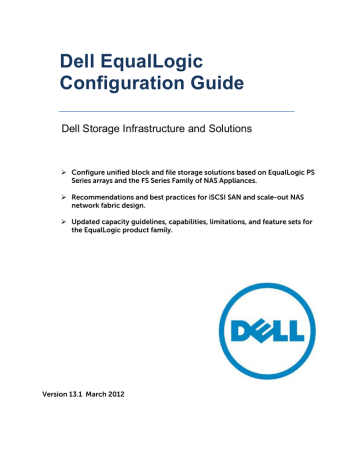

Initially, a PS Series group has only one member. Although this configuration is fully functional, adding more members expands the capacity of the group, increases network bandwidth, and improves overall performance.

If you are using MEM and the HIT kit, I think a lot of the tuning and performance optimizations are taken care of for you. In our environment we do not have VMware Enterprise licensing, so we had to use Round Robin. There are a few performance tuning changes you can make on the ESXi host (change the Round Robin IOPS threshold from 1000 to 3, and disable Delayed ACK and LRO) that make a substantial difference, especially in response times... Let me know if anybody is interested in any of these settings and I can post them as well…

Currently I am getting a maximum throughput (100% read, 0% random, 32 KB I/O size) of ~234 MBps, IOPS 7485, average response time 8.

If I am not mistaken, when properly configured, each vmkernel port assigned to iSCSI traffic on the host creates an individual iSCSI connection to the volumes on the PS Series array... Please verify that there are indeed two connections for each volume on the array (this can be done via EQL Group Manager or via the array console)... You can move the NICs one at a time to the new vSwitch if you cannot afford to bring down the host…

As one of the first troubleshooting tips, enable SSH on the host and run the esxtop command, then press n to display live performance data for only the network interfaces on that ESX host... But before you do this, please note which vmnics you have assigned for iSCSI traffic... I am almost certain you will see traffic flowing through only one of the assigned vmnics, hence the kind of performance numbers we all have been seeing (a little over 100 MBps throughput and around 3200+ IOPS, which by the way is approximately the theoretical maximum of a single gigabit port)... This was happening despite the fact that we had Round Robin enabled and it was showing both paths as Active (I/O)…

Also verify this from the controller side... If you have EqualLogic SAN HQ running in your environment, look under Network -> Ports… You should see roughly the same amount of data sent and received on both of the iSCSI gigabit ports on the controller…
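The throughput and IOPS figures quoted in this post can be cross-checked with simple arithmetic. The sketch below is only an illustration: it assumes an I/O size of 32 KiB (the only size for which the quoted IOPS and throughput figures agree), treats "MBps" as MiB/s, and ignores TCP/iSCSI protocol overhead. The function names are hypothetical and are not part of any Dell or VMware tool.

```python
KIB = 1024
MIB = 1024 * 1024


def throughput_mib_per_s(iops: float, io_size_bytes: int) -> float:
    """Throughput implied by an IOPS figure at a fixed I/O size."""
    return iops * io_size_bytes / MIB


def gigabit_line_rate_mib_per_s(links: int = 1) -> float:
    """Raw line rate of `links` 1 Gbit/s ports, before protocol overhead."""
    return links * 1e9 / 8 / MIB


io_size = 32 * KIB  # assumed I/O size

# Single active path: ~3200 IOPS
print(f"{throughput_mib_per_s(3200, io_size):.1f}")   # 100.0
# Both paths active: 7485 IOPS
print(f"{throughput_mib_per_s(7485, io_size):.1f}")   # 233.9
# Raw ceiling for one and two gigabit ports
print(f"{gigabit_line_rate_mib_per_s(1):.1f}")        # 119.2
print(f"{gigabit_line_rate_mib_per_s(2):.1f}")        # 238.4
```

Under these assumptions, about 100 MiB/s sits close to the ceiling of a single gigabit port, and ~234 MiB/s is close to the ceiling of two, which is what you would expect to see once traffic really flows over both vmnics.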

After working on this issue for over a month with EqualLogic Level 3 support, the Dell PowerEdge group (responsible for VMware issues within Dell), and directly with VMware, I think we might have found a resolution. That being said, a number of things can cause this issue, so I'll try to include some troubleshooting steps that might help narrow down the cause in your particular environment.

I would highly recommend you carefully read "Configuring iSCSI Connectivity with VMware vSphere 5 and Dell EqualLogic PS Series Storage"… When we first started working on this issue, this document was not even available (they still only had the best practices for vSphere 4.1). I would also highly recommend you set up the Storage Heartbeat port per the recommendations outlined in that document: it needs to be the lowest-numbered vmkernel port on the vSwitch, which means it must be created first on the vSwitch, and it should have jumbo frames enabled if you are using jumbo frames in your environment.

There still seem to be some weird issues with the VMware ESXi 5 software iSCSI initiator, even after Update 1 (build 5.0.0, 623860)… So it might not be a bad idea to start with a new vSwitch, especially because the Storage Heartbeat needs to be the lowest-numbered vmkernel port on that vSwitch.

So I've been working on this a lot today and I've made a little progress. Specifically, the document mentions the heartbeat VMK for iSCSI. I did not have this configured (I am using the MEMs). For a while my retransmits in SAN HQ have been above normal (below 1% but above 0.1%, where they should be below 0.1%). I was attributing this to the flowcontrol issue that Alex pointed out. After I added the Storage Heartbeat VMK, my retransmits fell to <0.1% and my transfer rate is much higher. I would suggest trying this if you haven't already.

On a side note, I have a replica environment that has a PS6100 and Dell R710 hosts with Intel PT quad-port cards in it for failover (I'm using the same in the primary except it's a PS6500). That site has the same switches (Cisco 3750) but a slightly older firmware (12.2.55 vs 12.2.58). This site is reporting proper flowcontrol on for the storage ports. I'm not sure if the switch firmware has anything to do with it, but I plan on opening up a TAC case soon to see what they say.
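For the retransmit figures mentioned in this thread, a rough way to reason about the thresholds is sketched below. It assumes the percentage is retransmitted packets divided by packets sent (how SAN HQ derives its figure is not stated here). The 0.1% and 1% boundaries come from the post; the label for 1% and above, the counter values, and the function names are hypothetical.

```python
def retransmit_percent(retransmitted: int, sent: int) -> float:
    """Retransmitted packets as a percentage of packets sent (assumed definition)."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return 100.0 * retransmitted / sent


def classify(pct: float) -> str:
    """Bucket a retransmit percentage using the thresholds quoted in the thread."""
    if pct < 0.1:
        return "normal"    # where the post says it should be
    if pct < 1.0:
        return "elevated"  # the "above normal" range described in the post
    return "high"          # assumed label; the post does not discuss this range


# Hypothetical counters, for illustration only
print(classify(retransmit_percent(5, 10_000)))    # normal
print(classify(retransmit_percent(50, 10_000)))   # elevated
```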